The False Dichotomy: Rehost vs. Rewrite

When organizations decide to modernize, they are frequently presented with a paralyzing binary choice: move everything exactly as is, or burn it down and start from scratch. This all-or-nothing mindset is responsible for countless stalled migrations and blown budgets. To find a viable path forward, we must first understand why these two extremes rarely deliver on their promises.

On one side sits the "Lift-and-Shift" (Rehost) strategy. It appeals to the desire for speed, promising a quick exit from the data center. However, moving a legacy monolith to the cloud without refactoring essentially transfers your on-premises technical debt to a new zip code. You take on the operational overhead of the cloud without gaining its primary advantages, such as auto-scaling, self-healing resilience, and granular cost control. Instead of a modern, agile system, you end up with the same fragile architecture, now running on expensive rented infrastructure.

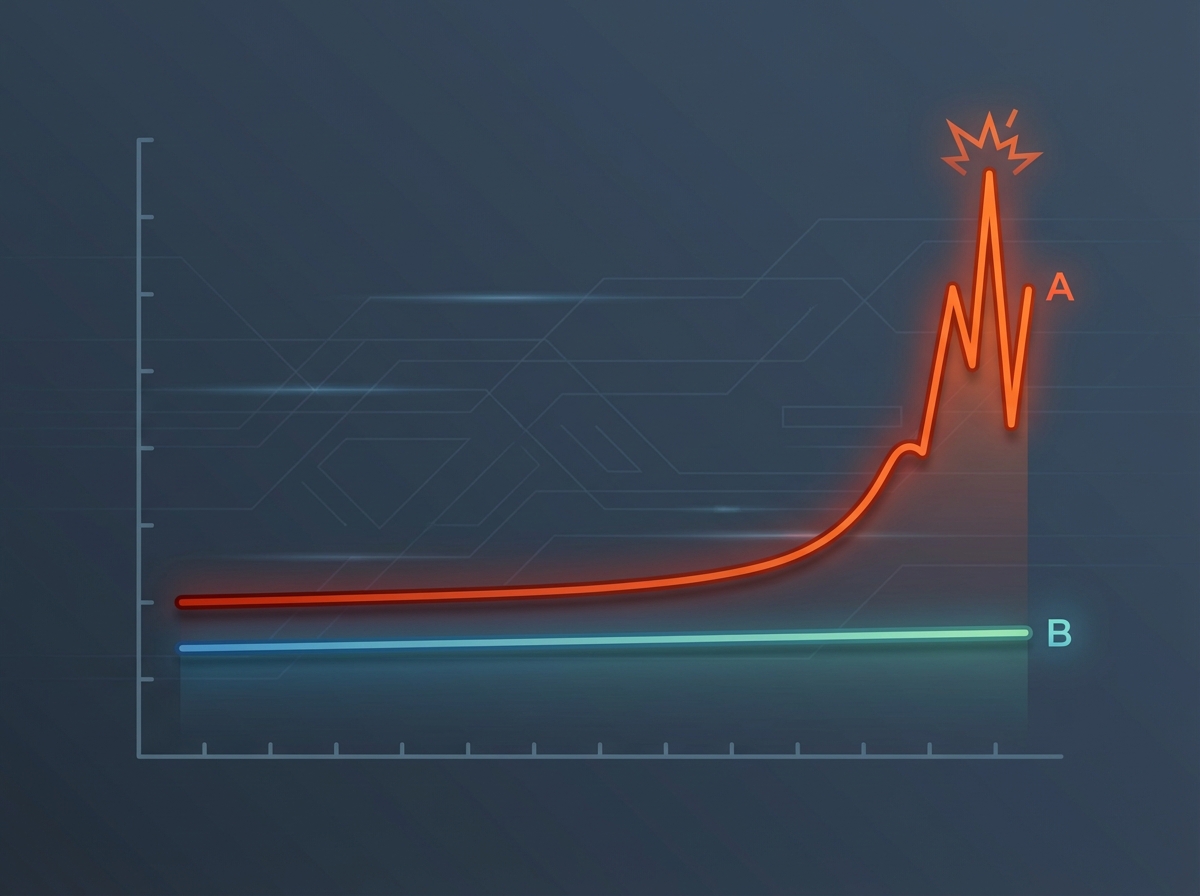

On the other side lies "The Big Bang Rewrite." This approach seduces engineering teams with the allure of a blank canvas: a chance to banish spaghetti code forever. Yet this strategy carries existential risk. Rewriting a complex production system from scratch frequently leads to dangerous outcomes:

- The "Dark" Period: A multi-year phase where the team stops shipping new features to customers to focus solely on achieving feature parity.

- Lost Domain Knowledge: Subtle business logic embedded in the legacy code is often missed or misunderstood during the translation.

- The Moving Target: By the time the rewrite is finally released, market requirements have often shifted, rendering the "new" system obsolete on day one.

Viewing modernization as a choice between these two failures is a strategic error. Incrementalism isn’t just a preference for caution; it is a necessity for risk control. By rejecting this binary choice, architects can adopt the Strangler Fig pattern, which lets value flow continuously while the system evolves, avoiding both the long feature freeze of the rewrite and the inefficiency of the rehost.

The Strangler Fig Pattern: Modernization by Interception

Named by Martin Fowler after the rainforest figs that seed in the upper branches of a host tree and grow downward, this pattern offers a compelling alternative to the high-risk "Big Bang" rewrite. Instead of shutting down the legacy system to build a replacement from scratch, you wrap the old system with a new application layer, gradually letting the new growth take over.

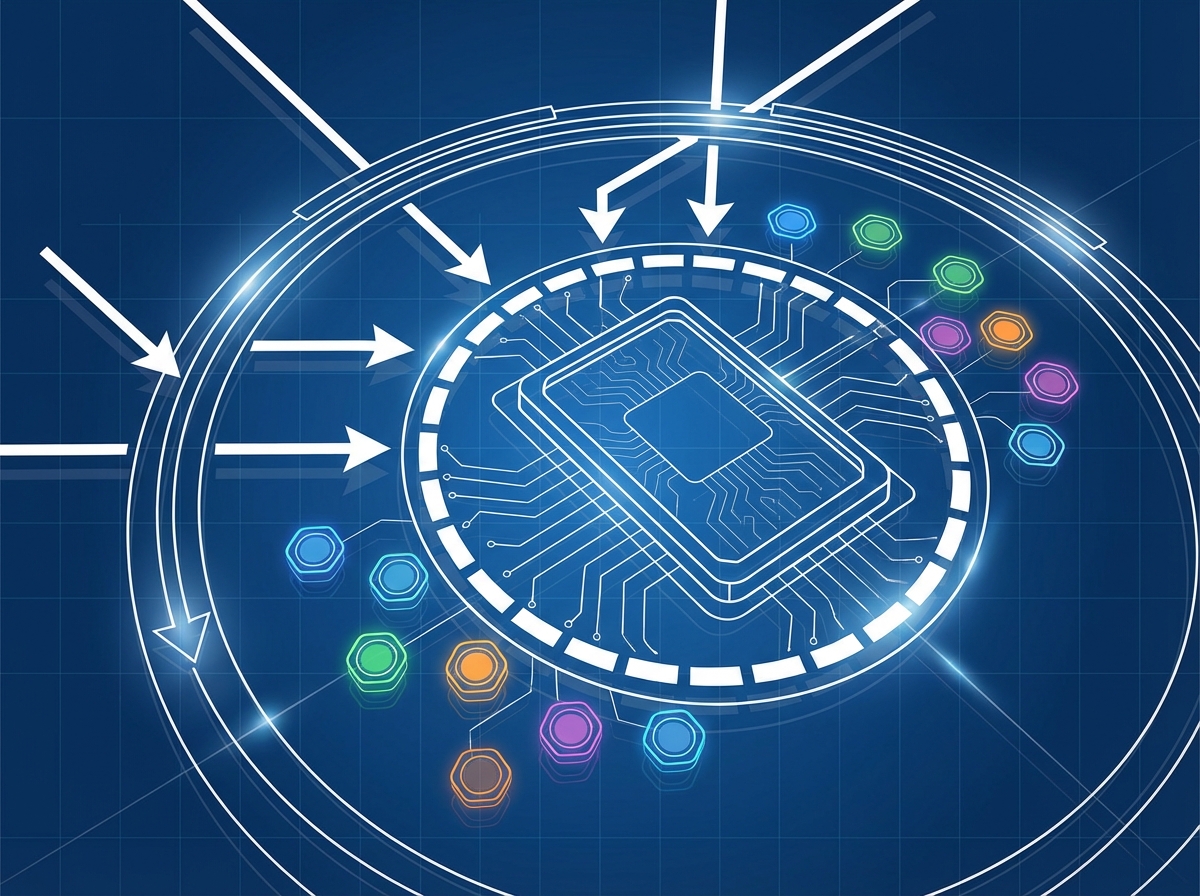

The mechanism relies on interception. You place an API gateway or a routing facade in front of your existing monolith. At first, this proxy routes all traffic to the legacy application. When you are ready to modernize a specific slice of functionality—such as the "User Profile" module—you build it as a new, cloud-native microservice. The proxy is then updated to intercept calls destined for user profiles and route them to the new service, while everything else continues to flow to the monolith.
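A minimal sketch of the facade's routing decision, assuming simple path-prefix routing: every request goes to the monolith unless a prefix has been intercepted for a new service, and the longest matching prefix wins. The `StranglerRouter` class, its methods, the upstream URLs, and the `/api/v1/profiles` path are hypothetical illustrations, not the API of any particular gateway product.

```python
from dataclasses import dataclass, field


@dataclass
class StranglerRouter:
    """Decides which upstream serves a request path (hypothetical sketch)."""

    legacy_upstream: str  # the monolith: default for every path
    routes: dict[str, str] = field(default_factory=dict)  # intercepted prefix -> new service

    def intercept(self, prefix: str, upstream: str) -> None:
        """Start sending every path under `prefix` to a new service."""
        normalized = prefix.rstrip("/")
        if not normalized:
            raise ValueError("the root path always belongs to legacy_upstream")
        self.routes[normalized] = upstream

    def release(self, prefix: str) -> None:
        """Roll back: send `prefix` to the monolith again."""
        self.routes.pop(prefix.rstrip("/"), None)

    def resolve(self, path: str) -> str:
        """Return the upstream for `path`, preferring the longest matching prefix."""
        best_prefix, best_upstream = "", self.legacy_upstream
        for prefix, upstream in self.routes.items():
            # Match whole path segments only, so "/api/v1/profiles" does not
            # capture "/api/v1/profiles-archive".
            matches = path == prefix or path.startswith(prefix + "/")
            if matches and len(prefix) > len(best_prefix):
                best_prefix, best_upstream = prefix, upstream
        return best_upstream


if __name__ == "__main__":
    router = StranglerRouter(legacy_upstream="http://legacy-monolith.internal")
    print(router.resolve("/api/v1/profiles/42"))  # http://legacy-monolith.internal
    router.intercept("/api/v1/profiles", "http://profile-service.internal")
    print(router.resolve("/api/v1/profiles/42"))  # http://profile-service.internal
    print(router.resolve("/api/v1/orders/7"))     # http://legacy-monolith.internal
```

Because `release` only deletes a route, rolling back a misbehaving extraction is a small change at the facade rather than a redeploy of the monolith.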

Crucially, this transition is invisible to the end user. As far as the customer or the client application is concerned, the API endpoint remains consistent. Behind the curtain, you are dismantling the monolith piece by piece, but the external interface never breaks.

Beyond the technical advantages, this approach offers a major psychological benefit for engineering teams. In a traditional rewrite, developers might work for eighteen months before a "Cutover Day" fraught with terror and the risk of rollback. With the Strangler Fig pattern, teams ship tangible value starting with the first extracted slice. They release production-ready code early in the process and validate their cloud-native architecture incrementally, rather than holding their breath for a release date that keeps slipping.

Solving the Data Gravity Problem

Moving stateless application logic is relatively straightforward. You can package code into containers and orchestrate them with Kubernetes fairly quickly. The real challenge, the anchor dragging down many modernization efforts, is the database. While code is fluid, state is sticky. This phenomenon is often referred to as "data gravity," and it requires a much more cautious approach than simply ripping tables out of a schema.

The golden rule of strangling a monolith is that the database split usually follows the service split. Attempting to dismantle a monolithic database before the application logic has been successfully decoupled is a recipe for data corruption and distributed transaction nightmares. Instead, you need architectural patterns that allow data to reside in—or sync between—two places during the transition phase.

To achieve this decoupling without downtime, architects typically rely on specific synchronization strategies:

- Change Data Capture (CDC): This is often the safest route. By reading the transaction logs of your legacy database, you can stream changes in near real-time to the new microservice’s database. This allows the new service to possess its own read-optimized data store while the monolith remains the system of record until the final cutover.

- Dual Write: In this approach, the application layer writes each change to both the old and new databases within the same request. This keeps both stores current as long as every write succeeds, but the two databases share no transaction: if one write succeeds and the other fails, the stores silently diverge unless you add explicit error handling, retries, or reconciliation.
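To make the CDC option concrete, here is a minimal sketch of the consuming side: applying row-level change events to the new service's own store. The `ChangeEvent` shape (a log position, an operation code, a key, and the row's new state) is an assumption loosely inspired by common CDC tools rather than any specific tool's format, and reading the actual transaction log is out of scope.

```python
from dataclasses import dataclass, field
from typing import Any, Iterable, Optional


@dataclass(frozen=True)
class ChangeEvent:
    """One row-level change captured from the legacy transaction log (assumed shape)."""

    lsn: int                      # position in the source log, strictly increasing
    op: str                       # "c" = create, "u" = update, "d" = delete
    key: Any                      # primary key of the changed row
    after: Optional[dict] = None  # row state after the change; None for deletes


@dataclass
class ReadModel:
    """The new microservice's own store, fed from the change stream."""

    rows: dict = field(default_factory=dict)
    checkpoint: int = 0  # LSN of the last event applied

    def apply(self, events: Iterable[ChangeEvent]) -> int:
        """Apply events in log order and return how many were applied.

        Events at or below the checkpoint are skipped, so a stream that
        redelivers events after a restart cannot apply a change twice.
        """
        applied = 0
        for event in events:
            if event.lsn <= self.checkpoint:
                continue  # already applied
            if event.op in ("c", "u"):
                if event.after is None:
                    raise ValueError(f"lsn {event.lsn}: {event.op!r} needs a row image")
                self.rows[event.key] = dict(event.after)  # store a copy of the full row
            elif event.op == "d":
                self.rows.pop(event.key, None)
            else:
                raise ValueError(f"lsn {event.lsn}: unknown op {event.op!r}")
            self.checkpoint = event.lsn
            applied += 1
        return applied
```

In a real service the checkpoint would need to be persisted in the same transaction as the row changes, so that a crash cannot leave the two out of step.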
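The failure handling that dual writes demand can be sketched as follows. This is one possible policy, assumed for illustration: the legacy database stays the system of record and is written first, and a failed write to the new store is recorded for later repair instead of failing the request. `DualWriter` and its two write callables are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class DualWriter:
    """Writes each change to the legacy store, then to the new store (hypothetical)."""

    write_legacy: Callable[[Any, dict], None]  # system of record
    write_new: Callable[[Any, dict], None]     # store owned by the new microservice
    pending_repair: list = field(default_factory=list)  # keys whose stores may disagree

    def save(self, key: Any, row: dict) -> None:
        # If the legacy write raises, the exception propagates and the new
        # store is never touched, so the system of record stays authoritative.
        self.write_legacy(key, row)
        try:
            self.write_new(key, row)
        except Exception:
            # The two databases share no transaction, so the legacy write
            # cannot be undone here. Record the divergence so a background
            # job can re-copy the row from the legacy store.
            self.pending_repair.append(key)
```

Writing the system of record first means the worst case is a stale new store, which a repair job can fix, rather than data that exists only in the store that is not yet authoritative.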

By employing these techniques, you ensure that the application logic drives the modernization process. Once the microservice is stable and the data synchronization is proven accurate, you can finally sever the link to the old schema, effectively overcoming the gravitational pull of the legacy system.
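One common way to prove that synchronization is accurate is to compare the two stores directly. A minimal sketch, assuming both sides can be read as snapshots keyed by primary key (with keys of one comparable type): hash each row and report missing, unexpected, and mismatched keys. The function names and report format are illustrative. Hashing matters once the stores live on different systems, since each side can compute digests locally and only the hashes need to be compared.

```python
import hashlib
import json
from typing import Any, Mapping


def row_digest(row: Mapping[str, Any]) -> str:
    """Stable hash of a row; sorting keys makes column order irrelevant."""
    encoded = json.dumps(row, sort_keys=True, default=str).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def reconcile(legacy: Mapping[Any, Mapping], new: Mapping[Any, Mapping]) -> dict:
    """Compare two keyed snapshots and list every discrepancy, sorted by key."""
    legacy_keys, new_keys = set(legacy), set(new)
    mismatched = sorted(
        key for key in legacy_keys & new_keys
        if row_digest(legacy[key]) != row_digest(new[key])
    )
    return {
        "missing_in_new": sorted(legacy_keys - new_keys),
        "unexpected_in_new": sorted(new_keys - legacy_keys),
        "mismatched": mismatched,
    }
```

An empty report across repeated runs is the kind of evidence that justifies severing the link to the old schema.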

Implementing the Facade: The Role of the API Gateway

To successfully replace a running engine without landing the plane, you need a mechanism to divert fuel to the new components without the passengers ever noticing. In a cloud-native migration, this role is played by the API Gateway. Acting as a sophisticated traffic cop, the gateway sits between your client applications and your backend, decoupling the public interface from the underlying implementation. By configuring routing rules at this layer, you can transparently direct specific API calls, such as /api/v1/users, to your legacy monolith, while routing a newly extracted path like /api/v1/notifications to a modern microservice.

The success of this routing strategy relies heavily on identifying the right "seams" within your existing architecture. You generally shouldn't start by ripping out the complex, high-dependency core of the application. Instead, analyze the monolith to find areas of low coupling—distinct functionalities that can be peeled off with minimal entanglement. Ideal candidates for these initial extraction points often include:

- Stateless Utilities: Services like PDF generation, image resizing, or email dispatchers that don't maintain complex persistence.

- Read-Only Data: Reporting modules or dashboards that query data but rarely modify the core transactional state.

- Edge Domains: Functionalities like user profiles or third-party integrations that sit on the periphery of the business logic.
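One rough way to shortlist these seams is to count coupling in a module dependency graph: modules that few others call (low fan-in) and that call few others (low fan-out) are cheaper to peel off. The sketch below assumes you already have such a graph, for example from static analysis or request tracing; `rank_seams` and the module names are hypothetical, and the score is a heuristic to inform, not replace, domain judgment.

```python
from collections import defaultdict


def rank_seams(dependencies: dict[str, set[str]]) -> list[tuple[str, int, int]]:
    """Rank modules from most to least loosely coupled.

    `dependencies` maps each module to the set of modules it calls.
    Returns (module, fan_in, fan_out) tuples, lowest fan_in + fan_out first,
    with ties broken alphabetically.
    """
    modules = set(dependencies)
    fan_in: dict[str, int] = defaultdict(int)
    for caller, callees in dependencies.items():
        modules |= callees  # include modules that appear only as callees
        for callee in callees - {caller}:  # ignore self-references
            fan_in[callee] += 1
    ranked = [
        (module, fan_in[module], len(dependencies.get(module, set()) - {module}))
        for module in modules
    ]
    ranked.sort(key=lambda item: (item[1] + item[2], item[0]))
    return ranked


if __name__ == "__main__":
    # Hypothetical dependency graph for an e-commerce monolith.
    graph = {
        "orders": {"billing", "inventory", "users"},
        "billing": {"users", "orders"},
        "inventory": {"orders"},
        "users": set(),
        "pdf_export": {"orders"},
    }
    for module, fan_in, fan_out in rank_seams(graph):
        print(f"{module}: fan-in {fan_in}, fan-out {fan_out}")
```

With this sample graph, `pdf_export` ranks first, in line with the stateless-utility candidates above, while the heavily shared `orders` module ranks last.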

However, routing traffic is only half the battle; ensuring payload compatibility is the other. This is where contract testing becomes non-negotiable. Your new microservice might use a modern tech stack and cleaner architecture, but if it returns a JSON object that differs even slightly from what the legacy client expects, such as a renamed field or a number serialized as a string, the client can break. Before flipping the switch at the gateway level, you must implement rigorous contract tests to verify that the new service acts as a true drop-in replacement, honoring the exact data structures, headers, and error codes defined by the legacy system.
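A contract check can be sketched as a comparison between a recorded legacy response and the new service's response to the same request: status code, selected headers, and the JSON structure (key sets and types, not the values). The response dictionary shape (`status`, `headers`, `body`) and the function names are assumptions for illustration; dedicated contract-testing tools offer richer matching rules.

```python
import json
from typing import Any


def json_type(value: Any) -> str:
    """Name the JSON type of a decoded value (int and float are both 'number')."""
    if value is None:
        return "null"
    if isinstance(value, bool):  # check before int: bool subclasses int
        return "boolean"
    if isinstance(value, (int, float)):
        return "number"
    if isinstance(value, str):
        return "string"
    if isinstance(value, list):
        return "array"
    if isinstance(value, dict):
        return "object"
    raise TypeError(f"not a JSON value: {value!r}")


def shape_diff(expected: Any, actual: Any, path: str = "$") -> list[str]:
    """Recursively compare key sets and JSON types, ignoring scalar values."""
    expected_type, actual_type = json_type(expected), json_type(actual)
    if expected_type != actual_type:
        return [f"{path}: expected {expected_type}, got {actual_type}"]
    diffs: list[str] = []
    if expected_type == "object":
        diffs += [f"{path}.{k}: missing" for k in sorted(expected.keys() - actual.keys())]
        diffs += [f"{path}.{k}: unexpected" for k in sorted(actual.keys() - expected.keys())]
        for key in sorted(expected.keys() & actual.keys()):
            diffs += shape_diff(expected[key], actual[key], f"{path}.{key}")
    elif expected_type == "array":
        for i, (e, a) in enumerate(zip(expected, actual)):
            diffs += shape_diff(e, a, f"{path}[{i}]")
    return diffs


def contract_violations(legacy: dict, candidate: dict,
                        headers: tuple[str, ...] = ("content-type",)) -> list[str]:
    """List every way the candidate response breaks the legacy contract."""
    problems = []
    if legacy["status"] != candidate["status"]:
        problems.append(f"status: expected {legacy['status']}, got {candidate['status']}")
    # HTTP header names are case-insensitive, so compare them lowercased.
    old = {k.lower(): v for k, v in legacy["headers"].items()}
    new = {k.lower(): v for k, v in candidate["headers"].items()}
    for name in headers:
        want, got = old.get(name.lower()), new.get(name.lower())
        if want != got:
            problems.append(f"header {name}: expected {want!r}, got {got!r}")
    problems += shape_diff(json.loads(legacy["body"]), json.loads(candidate["body"]))
    return problems
```

Flagging unexpected extra keys is deliberately strict, matching the "exact data structures" requirement; teams whose clients ignore unknown fields may choose to relax that rule.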