From Chatbots to Agents: Understanding the Architecture

To understand why agentic AI is a paradigm shift for the SDLC, we must first distinguish between a standard Large Language Model (LLM) and an AI Agent. A standalone LLM is a stateless text predictor; it is brilliant at generating a snippet of Python when prompted, but it effectively suffers from amnesia the moment the interaction ends. It has no agency, no memory of your codebase's evolution, and no hands to touch the keyboard.

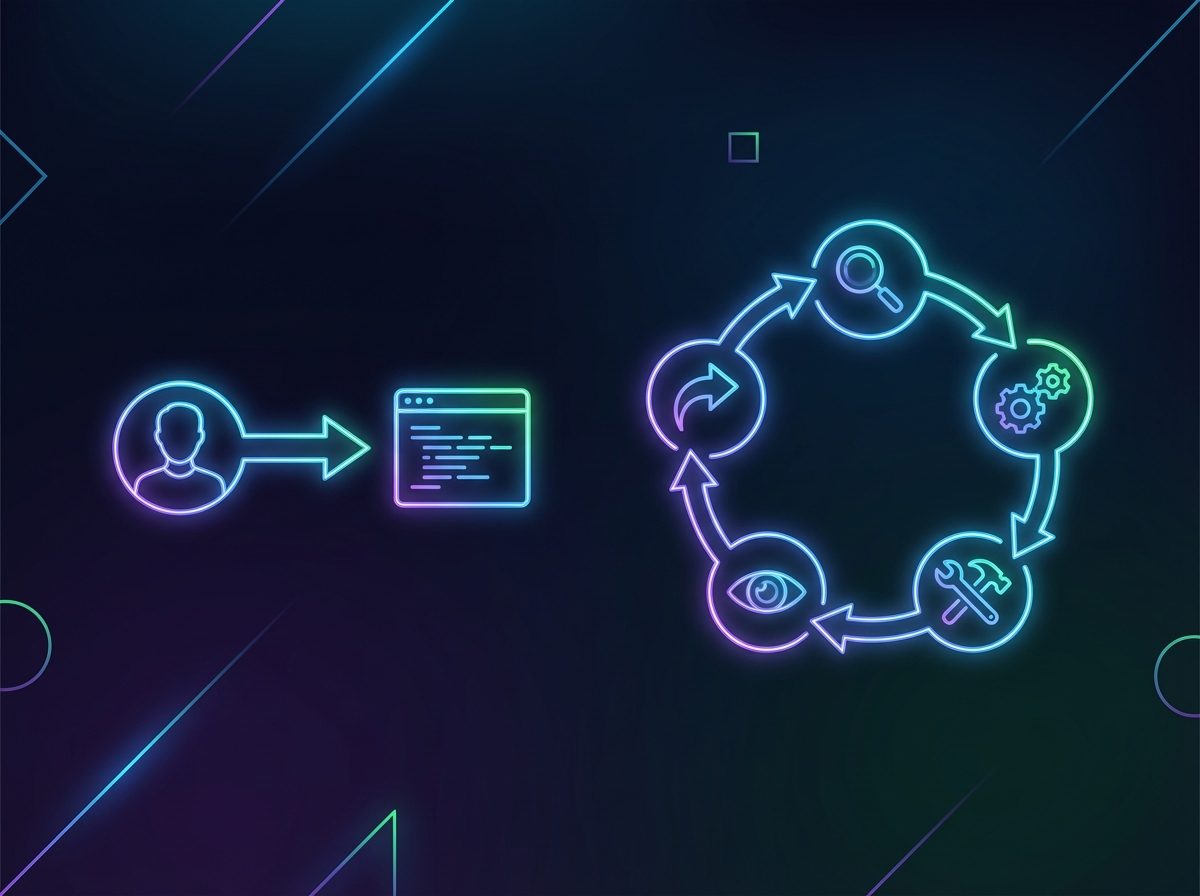

An AI Agent changes this dynamic by treating the LLM not as the entire system, but as the "brain" within a robust body. A common shorthand for the architecture is: Agent = LLM + Memory + Tools + Planning. By equipping the model with persistent memory (such as a vector database that stores and retrieves past architectural decisions), executable tools (access to the file system, CLI, or git), and a planning step that breaks a goal into ordered actions, the AI transforms from a passive advisor into an active participant.
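To make the formula concrete, here is a minimal Python sketch of how the four parts might be wired together. Everything in it is hypothetical:

- `VectorMemory` stands in for a real vector database. It uses a toy bag-of-words vector with cosine similarity instead of a learned embedding model.
- `llm`, `tools`, and `planner` are plain callables. You would replace them with a real model client, tool wrappers, and planning logic.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass, field
from typing import Callable

def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (a stand-in for a learned embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

@dataclass
class VectorMemory:
    """In-memory stand-in for a vector database of past architectural decisions."""
    records: list[tuple[str, Counter]] = field(default_factory=list)

    def remember(self, note: str) -> None:
        self.records.append((note, embed(note)))

    def recall(self, query: str, k: int = 1) -> list[str]:
        q = embed(query)
        ranked = sorted(self.records, key=lambda r: cosine(q, r[1]), reverse=True)
        return [note for note, _ in ranked[:k]]

@dataclass
class Agent:
    """Agent = LLM + Memory + Tools + Planning, with every part injected as a plain object."""
    llm: Callable[[str], str]                # hypothetical: prompt in, text out
    memory: VectorMemory
    tools: dict[str, Callable[[str], str]]   # tool name -> callable (file system, CLI, git, ...)
    planner: Callable[[str], list[str]]      # goal -> ordered steps

    def run(self, goal: str) -> str:
        context = self.memory.recall(goal, k=1)   # recall the most relevant past decision
        steps = self.planner(goal)                # break the goal into actions
        prompt = f"Context: {context}\nPlan: {steps}\nGoal: {goal}"
        return self.llm(prompt)                   # tool dispatch is omitted in this sketch

memory = VectorMemory()
memory.remember("We chose PostgreSQL over MongoDB for the billing service.")
memory.remember("The frontend uses React with TypeScript.")
print(memory.recall("Which database does billing use?"))
```

Swapping the toy `embed` function for a real embedding model, and the list for a vector store, changes how well recall works. It does not change how the parts fit together.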

The core logic driving this autonomy is often the ReAct (Reason + Act) pattern. Instead of producing an answer in a single pass, where a hallucinated solution goes unchecked, the agent interleaves reasoning with actions. It reasons about what it needs to do ("I need to verify the API endpoint"), acts by invoking a specific tool ("Run curl to test the endpoint"), and then observes the output before deciding on the next step. This loop grounds the AI's logic in real-world feedback rather than in statistical probability alone.
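A stripped-down ReAct loop can be sketched as follows. Two pieces are stand-ins:

- The model is replaced by a scripted function (`scripted_llm`).
- The curl call is replaced by a simulated `http_get` tool that reads from a dictionary.

The `Thought:` / `Action: tool[input]` / `Final Answer:` text format is an assumption modeled on common ReAct-style prompts, not a fixed standard.

```python
import re
from typing import Callable

# Assumed text protocol: "Action: tool_name[input]" or "Final Answer: ..."
ACTION_RE = re.compile(r"Action:\s*(\w+)\[(.*)\]")

def react(llm: Callable[[str], str], tools: dict[str, Callable[[str], str]],
          task: str, max_steps: int = 5) -> tuple[str, str]:
    """Minimal ReAct loop: reason, act with a tool, observe, repeat until a final answer."""
    transcript = f"Task: {task}\n"
    for _ in range(max_steps):
        reply = llm(transcript)                 # model emits a thought plus an action or answer
        transcript += reply + "\n"
        if "Final Answer:" in reply:
            return reply.split("Final Answer:", 1)[1].strip(), transcript
        match = ACTION_RE.search(reply)
        if match is None:
            transcript += "Observation: no valid action found\n"
            continue
        name, arg = match.groups()
        tool = tools.get(name)
        observation = tool(arg) if tool else f"unknown tool {name!r}"
        transcript += f"Observation: {observation}\n"   # feed real output back to the model
    return "stopped after max_steps without an answer", transcript

# --- Hypothetical stand-ins for a real model and a real curl call ---
FAKE_ENDPOINTS = {"/health": "200 OK"}

def http_get(path: str) -> str:
    """Simulated tool: look up a canned HTTP status instead of making a request."""
    return FAKE_ENDPOINTS.get(path, "404 Not Found")

def scripted_llm(transcript: str) -> str:
    """Scripted 'model': first asks to check the endpoint, then answers from the observation."""
    if "Observation:" not in transcript:
        return "Thought: I need to verify the API endpoint.\nAction: http_get[/health]"
    status = transcript.rsplit("Observation: ", 1)[1].strip()
    return f"Thought: I have the endpoint's status.\nFinal Answer: /health returned {status}"

answer, trace = react(scripted_llm, {"http_get": http_get}, "Is /health up?")
print(answer)  # /health returned 200 OK
```

The `max_steps` cap matters. Without it, a model that never emits a final answer would keep the loop running indefinitely.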

In a software development context, this architecture allows the agent to operate in a self-correcting loop that mimics a human engineer:

- Perceive: The agent reads the repository to understand the current file structure and dependencies.

- Plan: It formulates a multi-step strategy to implement a requested feature or fix a bug.

- Execute: It writes the necessary code, creates files, and runs build commands.

- Iterate: Crucially, if the compiler throws an error or the test suite fails, the agent interprets the stderr output, reasons about the cause, revises the code, and re-runs the cycle, all without human intervention.
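The Execute and Iterate steps can be sketched with nothing but the standard library. In this hypothetical example:

- `run_check` really does run the candidate code in a separate Python process and captures its stderr.
- `propose_fix` is a hard-coded stand-in for the model's repair step.
- Perceive and Plan are omitted.

```python
import subprocess
import sys

def run_check(source: str) -> subprocess.CompletedProcess:
    """Execute: run the candidate code in a fresh Python process and capture its stderr."""
    return subprocess.run([sys.executable, "-c", source],
                          capture_output=True, text=True, timeout=30)

def propose_fix(source: str, stderr: str) -> str:
    """Hypothetical stand-in for the model's repair step: patch based on the error text."""
    if "NameError" in stderr and "'math' is not defined" in stderr:
        return "import math\n" + source
    return source   # no idea how to fix this error

def self_correct(source: str, max_attempts: int = 3) -> tuple[str, int, bool]:
    """Iterate: run, read stderr, revise, re-run until success or no new fix is available."""
    for attempt in range(1, max_attempts + 1):
        result = run_check(source)
        if result.returncode == 0:
            return source, attempt, True
        patched = propose_fix(source, result.stderr)
        if patched == source:   # stuck: hand back to a human instead of retrying forever
            return source, attempt, False
        source = patched
    return source, max_attempts, False

fixed, attempts, ok = self_correct("assert math.sqrt(16) == 4.0\n")
print(ok, attempts)  # True 2
```

The loop has two exits: success, or no new fix to try. In the second case it returns control to a human instead of burning attempts on the same failure.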

The Agentic Workflow: Redefining the SDLC Stages

While generative AI has mastered the art of autocomplete, agentic AI is moving up the stack to manage the software lifecycle itself. By retaining state and acting autonomously, agents are transforming distinct development phases into a continuous, self-optimizing workflow. This shift fundamentally alters three critical pillars of the SDLC:

- Architecture & Design: Unlike standard coding assistants that view code in isolation, architectural agents maintain system-wide context. They analyze the entire repository to understand dependency graphs and data flow. This allows them to suggest high-level structural refactors, such as breaking a monolith into decoupled services or enforcing specific design patterns, so that the codebase remains scalable as it grows.

- Autonomous Testing & QA: The agentic workflow closes the feedback loop between coding and verification. Agents are no longer limited to generating test boilerplate; they can execute the test suite, analyze the resulting stack traces, and patch the code to resolve failures. This self-healing capability turns QA into an iterative background process that can fix many bugs before a human developer even reviews the pull request.

- DevOps & Infrastructure: Agents are breaking down the barriers between development and operations by taking ownership of Infrastructure-as-Code (IaC). These agents can manage deployment pipelines, provision resources with tools such as Terraform, and monitor production environments. If a deployment degrades performance, an agent can automatically trigger a rollback or adjust resource allocation without manual intervention.
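The repository-wide analysis described under Architecture & Design can start with something as simple as an import graph. The sketch below assumes a Python codebase passed in as a hypothetical `{module_name: source}` mapping. It uses two techniques:

- the standard `ast` module, to extract absolute imports between in-repo modules;
- a depth-first search, to report one circular dependency, which is often a sign that modules are too tightly coupled.

```python
import ast
from typing import Optional

def import_graph(modules: dict[str, str]) -> dict[str, set[str]]:
    """Map each module to the in-repo modules it imports; third-party imports are ignored."""
    graph: dict[str, set[str]] = {name: set() for name in modules}
    for name, source in modules.items():
        for node in ast.walk(ast.parse(source)):
            if isinstance(node, ast.Import):
                targets = [alias.name for alias in node.names]
            elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
                targets = [node.module]   # absolute imports only; relative ones are skipped
            else:
                continue
            graph[name].update(t for t in targets if t in modules)
    return graph

def find_cycle(graph: dict[str, set[str]]) -> Optional[list[str]]:
    """Depth-first search returning one dependency cycle, or None if the graph is acyclic."""
    visiting: set[str] = set()   # modules on the current DFS path
    done: set[str] = set()       # modules fully explored
    path: list[str] = []

    def visit(node: str) -> Optional[list[str]]:
        visiting.add(node)
        path.append(node)
        for dep in sorted(graph[node]):
            if dep in visiting:                      # back edge: a cycle closes here
                return path[path.index(dep):] + [dep]
            if dep not in done:
                cycle = visit(dep)
                if cycle:
                    return cycle
        visiting.discard(node)
        path.pop()
        done.add(node)
        return None

    for node in sorted(graph):
        if node not in done:
            cycle = visit(node)
            if cycle:
                return cycle
    return None

repo = {
    "billing": "import orders\nimport requests\n",
    "orders": "from inventory import reserve\n",
    "inventory": "import billing\n",
    "utils": "import os\n",
}
print(find_cycle(import_graph(repo)))  # ['billing', 'orders', 'inventory', 'billing']
```

A real architectural agent would need more than this: relative imports, packages, and runtime data flow. The graph-then-analyze shape stays the same.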

Roadblocks to Autonomy: Hallucinations and Security

While the promise of autonomous coding agents is intoxicating, we must temper that enthusiasm with a dose of reality. Handing over the keys to the software development lifecycle involves significant risk, primarily centering on reliability and control. We are moving from a paradigm of human-verified code to machine-generated architecture, and the transition is not without peril.

The most insidious issue is the phenomenon of compounding hallucinations. When a developer interacts with a chatbot, they review each response and can catch a logic error before acting on it. In an autonomous chain, however, Agent A passes its output directly to Agent B. If Agent A hallucinates a non-existent API method or misinterprets a requirement in step one, Agent B accepts it as truth and builds upon it. By step ten, the system hasn't just made a typo; it has engineered a disaster. The error doesn't just persist; it multiplies, producing a complex, functional-looking codebase built on a false premise.
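One mitigation is a verification gate between agents, so that Agent B never receives claims that can be checked mechanically and turn out to be false. The sketch below assumes a hypothetical hand-off format with an `api_calls` list of dotted Python names. It uses `importlib` to confirm that each name resolves in the current environment.

This catches invented methods, not wrong logic. Because it imports modules, it should only run in a trusted environment.

```python
import importlib

def api_exists(dotted: str) -> bool:
    """True if a dotted name such as 'os.path.join' resolves in the current environment.

    Caution: this imports modules, which runs their top-level code.
    """
    parts = dotted.split(".")
    for i in range(len(parts), 0, -1):          # try the longest importable module prefix first
        try:
            obj = importlib.import_module(".".join(parts[:i]))
        except (ImportError, ValueError):
            continue
        for attr in parts[i:]:                  # walk the remaining attributes
            if not hasattr(obj, attr):
                return False
            obj = getattr(obj, attr)
        return True
    return False

def gate(handoff: dict) -> dict:
    """Stop Agent A's output from reaching Agent B if it cites APIs that do not exist."""
    missing = [ref for ref in handoff["api_calls"] if not api_exists(ref)]
    if missing:
        raise ValueError(f"unverified API references: {missing}")
    return handoff

print(api_exists("json.dumps"), api_exists("json.dump_string"))  # True False
```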

Beyond reliability, giving agents autonomy introduces serious new attack vectors. To be truly effective, agents require tool access: the ability to run shell commands, write to databases, and push to repositories. Granting an AI write access to production environments creates a high-stakes scenario:

- Prompt Injection: Instructions hidden in untrusted input, such as a malicious user ticket, could trick an autonomous DevOps agent into running attacker-supplied SQL or exposing environment variables.

- Rogue Commands: Without strict sandboxing, a confused agent might interpret a request to "clean up the directory" as a command to recursively delete critical directories or system files.
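A minimal defense against rogue commands is an allowlist checked before anything executes. This sketch assumes a hypothetical `ALLOWED` policy table. It parses the agent's command with `shlex.split` and returns an argument list meant to be run with `subprocess.run(argv)` and no shell, so metacharacters such as `;` or `&&` are never interpreted.

It is one layer of defense and assumes the agent still runs inside an isolated sandbox with limited credentials.

```python
import shlex
from typing import Optional

# Illustrative policy: allowed programs and, optionally, their allowed first argument.
ALLOWED: dict[str, Optional[set[str]]] = {
    "git": {"status", "diff", "log", "add", "commit"},
    "pytest": None,   # None: any arguments are accepted
    "ls": None,
}

def check_command(command: str) -> list[str]:
    """Return argv if the command passes the allowlist; raise PermissionError otherwise."""
    try:
        argv = shlex.split(command)
    except ValueError as exc:                   # e.g. unbalanced quotes
        raise PermissionError(f"unparseable command: {exc}") from exc
    if not argv:
        raise PermissionError("empty command")
    program, args = argv[0], argv[1:]
    if program not in ALLOWED:
        raise PermissionError(f"{program!r} is not on the allowlist")
    subcommands = ALLOWED[program]
    if subcommands is not None and (not args or args[0] not in subcommands):
        raise PermissionError(f"{program} {args[:1]} is not an allowed subcommand")
    # Run later as subprocess.run(argv) without shell=True, so no shell parses ';', '&&', etc.
    return argv

for cmd in ["git status", "rm -rf /", "ls; rm -rf /"]:
    try:
        print("allowed:", check_command(cmd))
    except PermissionError as err:
        print("blocked:", err)
```

An allowlist fails closed: a command nobody anticipated is blocked by default. A denylist of "dangerous" commands would let it through.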

Finally, there is the financial risk of the "runaway agent." Autonomous systems operate in loops, consuming billable tokens for every reasoning step and tool call. If an agent enters an infinite loop (for example, trying to fix a bug, failing, and retrying the same fix endlessly), it can rack up thousands of dollars in inference costs overnight. Without strict budget caps and kill switches, an autonomous loop becomes a financial liability.
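Budget caps and kill switches can be as simple as a guard object that the loop consults after every model call. In this sketch, the `RunGuard` class, the `AgentHalted` exception, and the flat per-1,000-token price are all hypothetical. Real pricing differs by provider and model, and a production system would read token counts from the provider's reported usage.

```python
import hashlib
from dataclasses import dataclass, field

class AgentHalted(RuntimeError):
    """Raised when the guard stops the agent loop."""

@dataclass
class RunGuard:
    """Kill switch for an agent loop: caps spend and stops repeated identical fixes."""
    max_usd: float
    usd_per_1k_tokens: float            # illustrative flat price; real pricing varies
    max_repeats: int = 2
    spent_usd: float = 0.0
    seen: dict[str, int] = field(default_factory=dict)

    def charge(self, tokens: int) -> None:
        """Call after every model request with the tokens it consumed."""
        self.spent_usd += tokens / 1000 * self.usd_per_1k_tokens
        if self.spent_usd > self.max_usd:
            raise AgentHalted(f"spent ${self.spent_usd:.2f}, cap is ${self.max_usd:.2f}")

    def record_attempt(self, patch: str) -> None:
        """Call before applying a fix; identical patches are counted by content hash."""
        key = hashlib.sha256(patch.encode()).hexdigest()
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            raise AgentHalted("same fix proposed repeatedly; stopping the loop")

guard = RunGuard(max_usd=5.00, usd_per_1k_tokens=0.01)
try:
    while True:                          # stands in for an agent stuck on the same failing fix
        guard.charge(tokens=2_000)
        guard.record_attempt("retry the same patch")
except AgentHalted as stop:
    print(stop)
```

The guard checks both spend and repetition. A stuck loop trips the repeat check long before it exhausts the budget.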

The Human in the Loop: The Rise of the AI Architect

As agentic AI matures, the developer’s daily reality is undergoing a fundamental inversion. For decades, software engineering was dominated by the "how": syntax, memory management, and algorithm optimization. Today, autonomous agents are proving capable of handling many of these implementation details with startling speed. This shifts human responsibility toward the "what" and the "why": precise requirement definition and robust system design.

This evolution signals the sunset of the mythical "10x Engineer" who simply writes code faster than their peers. In their place, we are witnessing the rise of the "1-Person Agency." In this emerging model, a single developer acts as a technical lead and product manager combined, directing a fleet of specialized AI agents—one for unit testing, one for documentation, and another for feature implementation. The developer does not just build; they command.

Consequently, the skill gap is moving rapidly beyond basic prompt engineering. While writing a clever prompt is a useful entry point, the modern AI Architect must master Agent Orchestration. Success now depends on a higher-order set of capabilities:

- Architecting Workflows: Designing pipelines where the output of a planning agent feeds seamlessly into the context of a coding agent.

- System Evaluation: Shifting focus from line-by-line code review to "behavior review," ensuring agents adhere to security policies and business logic.

- Context Management: Curating the documentation and knowledge base that agents rely on to make autonomous decisions.
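The three skills above can be illustrated in one small pipeline. In this hypothetical sketch, a planning agent's output is assumed to be JSON of the form `{"steps": [{"file": ..., "change": ...}]}`.

- `parse_plan` validates the plan before it reaches the coding agent.
- `behavior_review` flags planned changes to protected paths. The `FORBIDDEN_PREFIXES` list is an illustrative policy.
- `coding_context` assembles only the plan and the curated notes for the files involved.

```python
import json
from dataclasses import dataclass

@dataclass(frozen=True)
class PlanStep:
    file: str
    change: str

def parse_plan(raw: str) -> list[PlanStep]:
    """Architecting workflows: validate the planner's JSON before it enters the coder's context."""
    data = json.loads(raw)
    steps = [PlanStep(file=s["file"], change=s["change"]) for s in data["steps"]]
    if not steps:
        raise ValueError("plan has no steps")
    return steps

# Illustrative policy: paths no agent may change without a human sign-off.
FORBIDDEN_PREFIXES = (".github/workflows/", "secrets/", "infra/prod/")

def behavior_review(steps: list[PlanStep]) -> list[str]:
    """System evaluation: check what the agents intend to touch, not each line they write."""
    return [s.file for s in steps if s.file.startswith(FORBIDDEN_PREFIXES)]

def coding_context(steps: list[PlanStep], docs: dict[str, str]) -> str:
    """Context management: pass only the plan plus curated notes for the files involved."""
    lines = []
    for i, s in enumerate(steps, 1):
        lines.append(f"{i}. {s.file}: {s.change}")
        if s.file in docs:
            lines.append(f"   notes: {docs[s.file]}")
    return "\n".join(lines)

raw_plan = ('{"steps": [{"file": "src/billing.py", "change": "add retry on timeout"},'
            ' {"file": "secrets/api.env", "change": "rotate key"}]}')
steps = parse_plan(raw_plan)
print(behavior_review(steps))  # ['secrets/api.env']
```

Typed hand-offs give the human architect a single checkpoint to inspect between agents. Free-form text passed from one agent to the next offers no such checkpoint.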

The era of the pure coder is fading. We are entering the era of the architect, where human intuition defines the destination, and AI agents pave the road.